Traversing a tree structure often involves writing a recursive function. However, Python isn’t the best language for this purpose. Therefore I started flattening the tree into a key-value dictonary structure. Logically it is still a tree, but it is physically stored as a dictionary. Therefore it is now easier to write a simple loop to traverse it.

Previously I was working on making it to deal with multi-dimensional dataset. In this iteration I mainly flatten the tree structure into a dictionary, and also fixed the threshold code to choose better leaf nodes.

The code now lives at github. It is currently named as lann, with the ‘l’ mainly stands for ‘learn’ to indicate this is not intended to be used in production. At the time of writing, the revision requires a lot of clean up to be at least readable. Also I have included some experimental code in the comments to be explored in the future.

Another reason I wanted to change the persistence strategy is to see if it is feasible to make it run concurrently. However, in my limited test the multithreading code speeds up neither the tree building nor the searching part. The usage of closure is also removed, as Python is refusing to pickle them for some reason.

However, one thing that did work better is the threshold value. Now I have fixed it to include leaf nodes from both sides of the plane if the query point lies very close to it. Previously it would only consider both cases only when the point has negative distance within threshold value. So now it is possible to have better result in higher dimensional space.

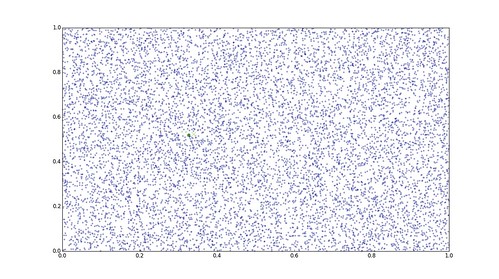

threshold=0.0, global nearest denoted by ‘+’, ann denoted by ‘X’

In the visualization above, the nearest point is chosen by considering only one leaf (members colored in green). However, the nearest point that we are interested in does not fall within the leaf node.

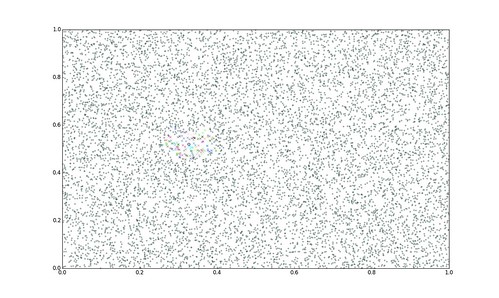

threshold=0.05, each color nearer to the query (the dot) indicates cluster membership

If the threshold value is increased to 0.05, more neighbouring clusters are now being included. Each of the considered cluster is coloured differently (while the others are coloured the same).

Actual program output for reference

So this is all for the brief update on the experimental code for now.